We’ve got a thing that’s called radar love

Google’s Advanced Technology and Projects division (ATAP) first announced Soli, its radar-based gesture technology project, almost seven years ago. Although it sounded really promising, the implementations we got never seemed to quite live up to its potential — the Nest Hub (2nd generation), Nest Thermostat, and Pixel 4 all feature Soli tech, but the project has otherwise been low-key. Google apparently isn’t done with Soli yet, and a recent ATAP video sure suggests it might be an integral feature in future devices.

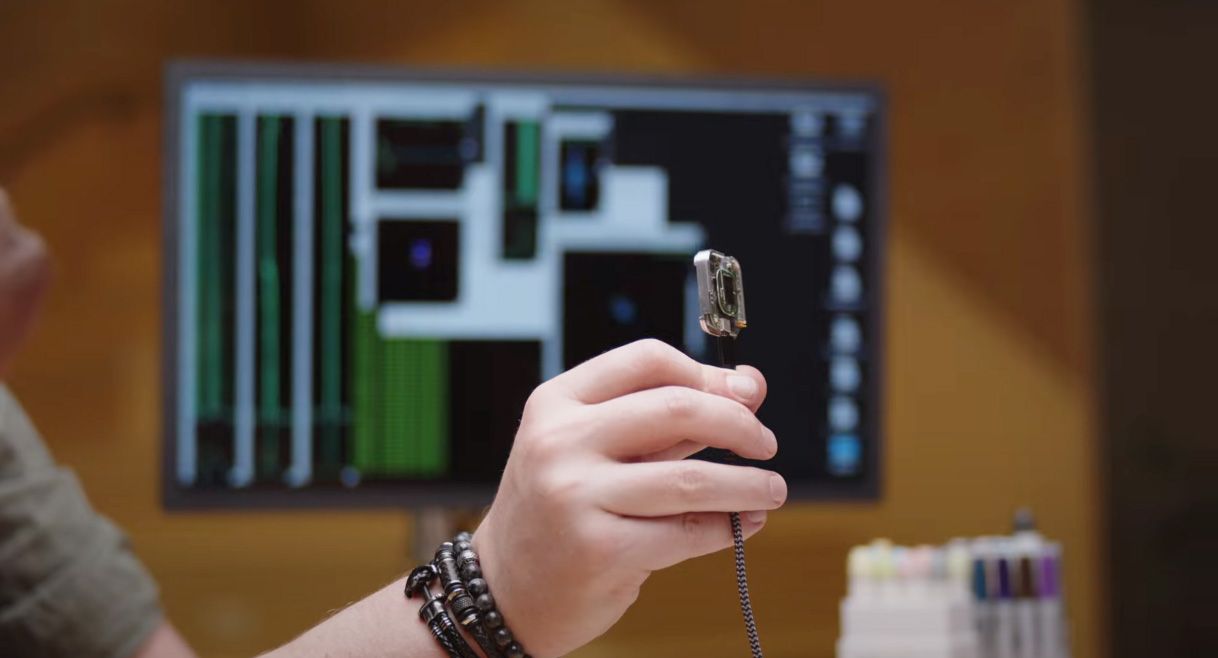

Some intriguing hardware is used to demonstrate the work ATAP’s been up to, including a neat square-shaped smart display mounted by a door that shows the outside temperature, then switches to a warning that rain is on the way when someone walks by. A similar but larger (and more rectangular) device shows up in another scene, helping to take calls.

We also see Google training Soli to understand simple nonverbal actions, with the goal of learning how to interpret the social context of various movements. Soli will learn, it seems, in a truly nuanced way. As ATAP head of design Leonardo Giusti notes in the video, humans can often understand each other without speaking at all, so what would it be like if computers could understand us in the same way?

While the hardware that appears here is a mix of mock-ups and development boards made to demonstrate where this technology could go, it’s also apparent that Google’s goal is to make Soli helpful but unobtrusive — something we could integrate naturally into daily environments. A broader goal seems to be making as many devices hands-free as possible.

If you liked this insider look, there’s more to come: Google says this is the first episode of a documentary series that will show off all sorts of cool stuff that ATAP has been up to. We can’t wait to see what’s next.
