Gemini’s promise of cutting-edge reasoning and seamless cloud integration was too good to pass up — or so I thought.

However, as the local model landscape shifted with the arrival of heavy hitters like Gemma 4, Qwen3.6, and more, I started to wonder whether I really need to continue my $20 a month subscription.

I decided to pull the plug. I migrated my entire daily workflow to a local-first stack running on my own hardware.

A couple of weeks later, the subscription is gone, my privacy is back in my hands, and surprisingly, my output hasn’t dropped a bit.

Here’s how I built a local AI powerhouse that finally made the cloud feel optional.

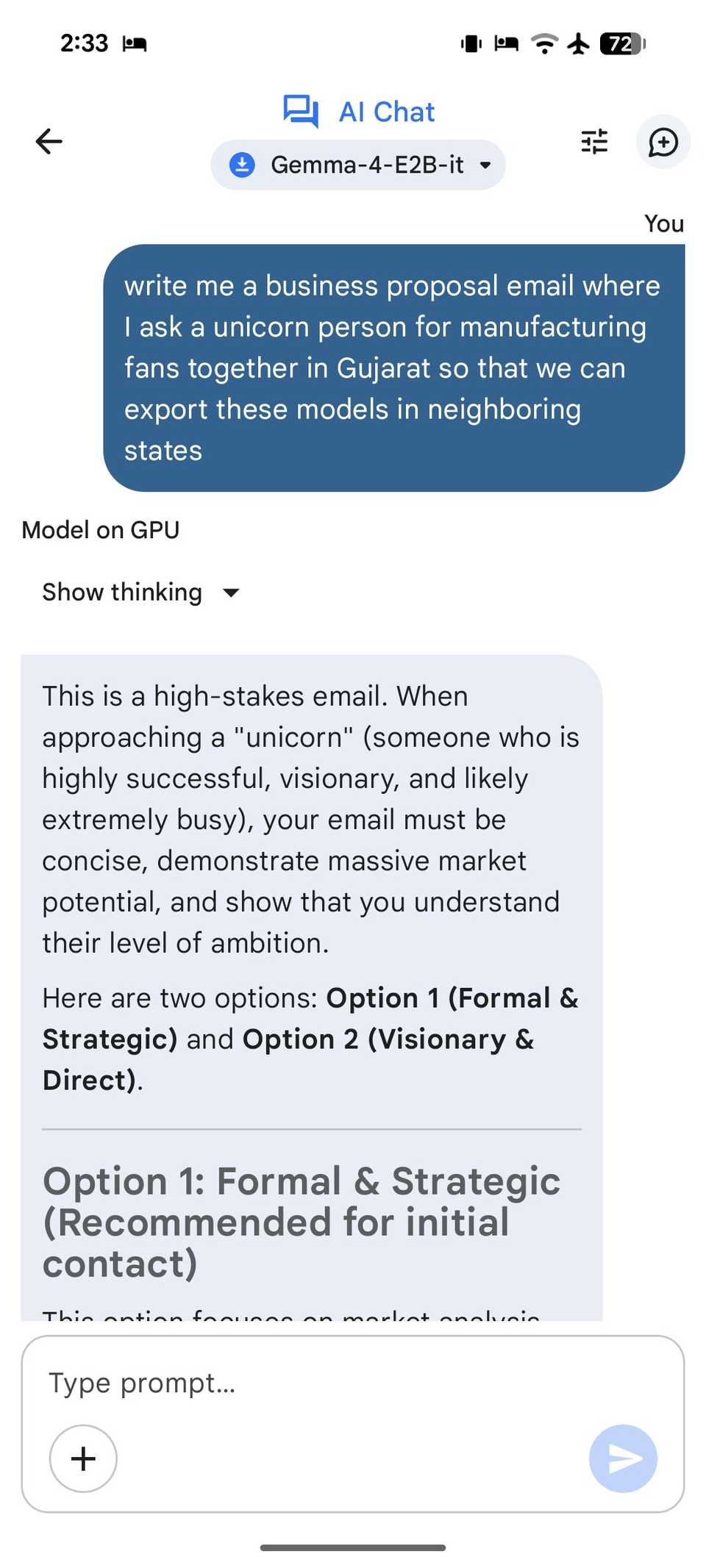

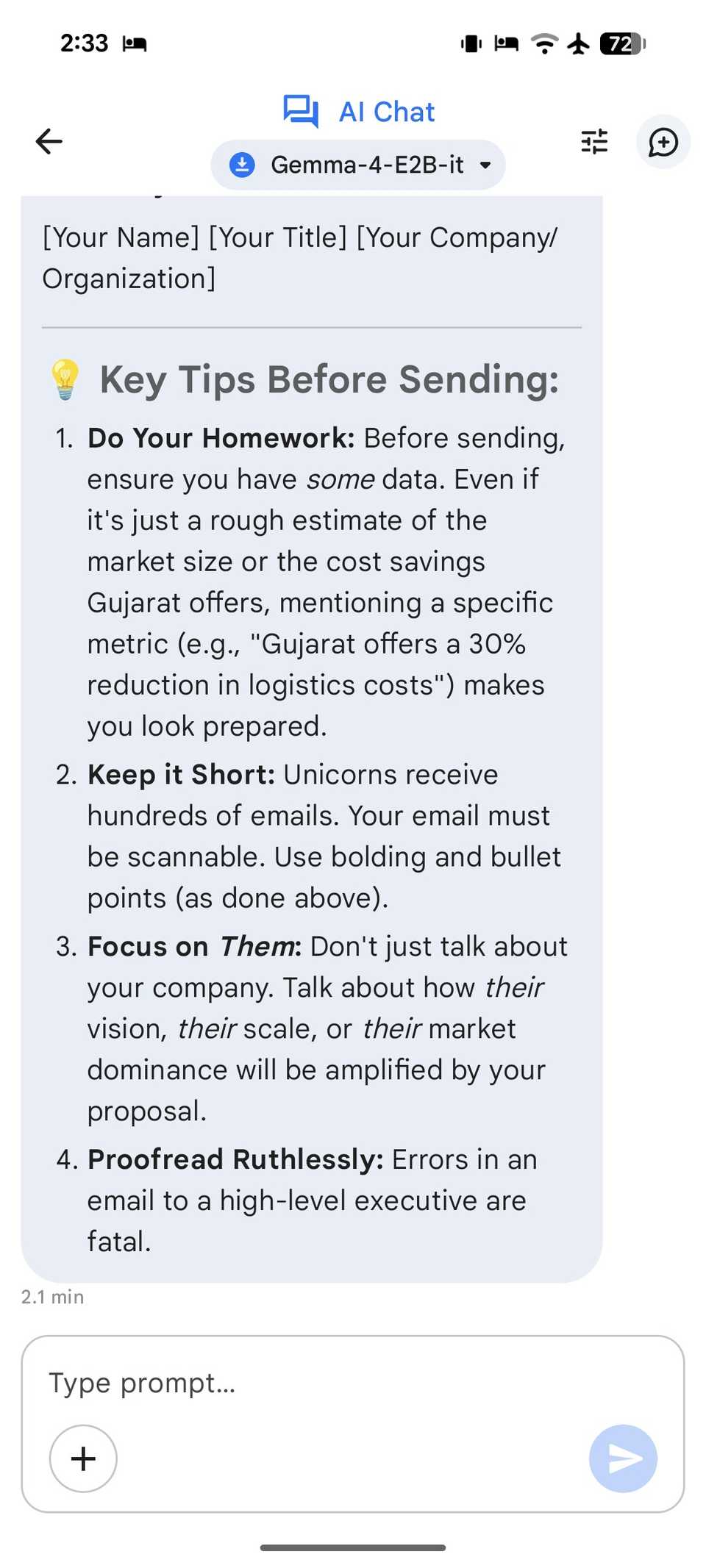

Gemma 4 E2B and 26B A4B

Gemma is essentially the open-weight sibling of Gemini, and with the release of Gemma 4, the performance gap has practically closed for my daily workflow.

The beauty of Gemma 4 is that it isn’t a one-size-fits-all model. For my setup, I rely on two specific versions that handle different parts of my life: the E2B for my mobile workflow and the 26B-A4B for the heavy lifting on my desktop.

The E2B model is a marvel of mobile engineering. If you are an Android user, you can run this fully offline via the Google AI Edge Gallery app. It only needs around 1.5GB of RAM to function properly on your device.

Whether you want to quickly draft a professional response to a client about a jewelry shipment delay or summarize a dense PDF, you can get the job done even in a dead zone with no signal. It’s good and quick enough for handling daily casual tasks.

However, when I’m back at my desk with a dedicated Windows setup, I rely on the powerful 26B-A4B model. Although it has 26 billion total parameters, it only uses about 3.8 billion per token.

I can feed it a 50-page PDF of a new technical whitepaper, and it can summarize specific sections or find contradictions across the document in real time.

Last week, I was planning a weekend trip for my family. I copied and pasted the text from three hotel websites and two travel blogs into the chat.

Instead of me scrolling back and forth to compare prices, I just asked the model:

Create a table comparing these five hotels based on distance from the lake, breakfast options, and whether they have a kids’ play area.

It built the table perfectly in under a minute.

By splitting my tasks between the Edge and the Desktop (via LM Studio), I have replicated the Gemini experience for $0 a month.

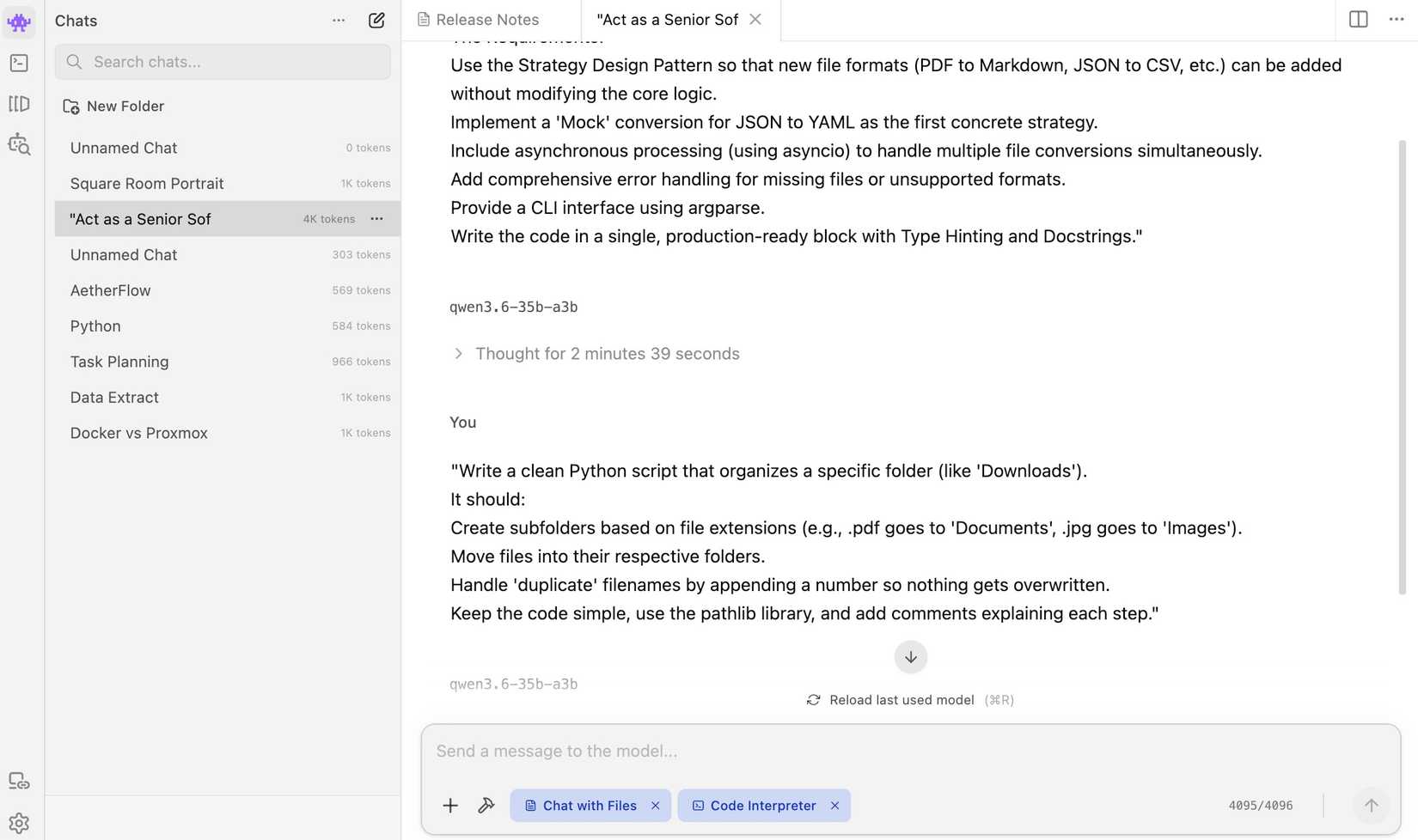

Qwen3.6-27B

While Gemma handles my daily reasoning, Qwen3.6-27B is the senior developer on my local team.

If you are a developer who needs high-end coding assistance, this is the model that will finally let you cut the cord.

Agentic fluency is where Qwen 3.6 really shines. It doesn’t just suggest the next line of code and call it a day. It understands repository-level context.

I can feed it multiple files from a project, and it won’t forget the utility functions I defined three files ago.

I often use Qwen as a local Terminal-Bench specialist. Because it’s trained specifically for agentic terminal work, it’s quite good at automation.

Because it’s a 27B model, I can run it at near-instant speeds on my HP Spectre with a decent mobile GPU, or at lightning speed on my home lab server.

By using LM Studio and offloading the layers to my GPU, I have turned my local machine into a coding powerhouse that rivals anything the cloud can offer.

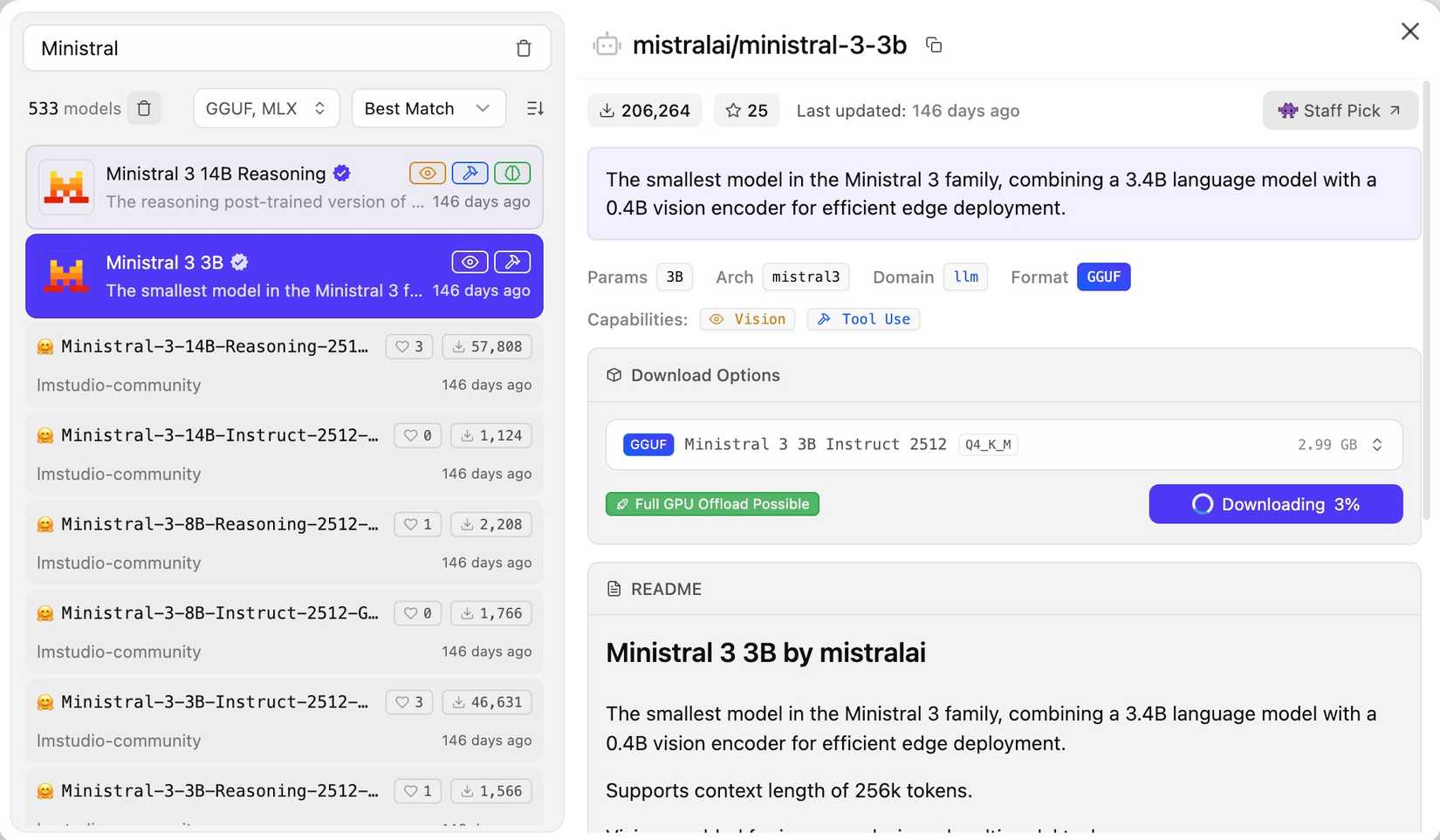

Ministral 3 3B

If Gemma 4 doesn’t work for you, try Ministral 3 3B. It’s another high-performance alternative that proves size isn’t everything.

It delivers nearly instantaneous text generation that makes cloud-based AI feel slow.

Most models start to lose the plot when you give them a long thread of messages. Ministral 3B maintains a surprising amount of coherence for its size.

It’s the model I keep pinned to my taskbar for the hundreds of micro-tasks that fill my day.

Also, in my limited time, the answers feel more like a tool and less like a lecture experience.

By keeping Ministral 3B as my always-on assistant, I have realized that 90% of my AI needs don’t require a trillion-parameter giant in the cloud. They just need a smart, fast, and local 3B model that does the job.

I thought Google Docs was enough until I paired it with Gemini

The secret weapon for flawless documents

Local-first productivity

Overall, ditching Google AI Pro turned out to be an upgrade. By moving my daily operations to a local-first stack, I have created a setup that is faster, more private, and entirely my own.

Now, local AI is no longer a compromise; it’s a professional powerhouse capable of handling everything from complex coding to deep research. The overall output and performance entirely depend on the hardware you are using.

These are just my personal recommendations. You shouldn’t limit yourselves to these models only. You can always try other local LLMs that fit your needs and hardware setup, and ditch these expensive monthly plans for good.